To scrape a website means to download it, such as for an offline copy or to do some analysis on it.

For example, if you have a competitor with a strong content marketing game, you might want to use their site’s headlines as a start for your bulk content strategy. That’s what we’ll show how to do throughout this article.

Step 1: Create a new Google Sheet

Go to https://sheet.new/ to create a new Google spreadsheet.

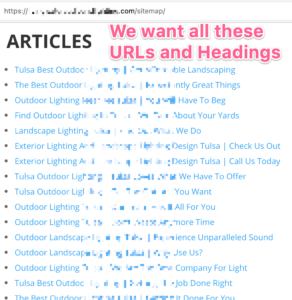

Step 2: Copy the list of links from the target website

If the site you’re scraping has a sitemap, that’s probably the place to start.

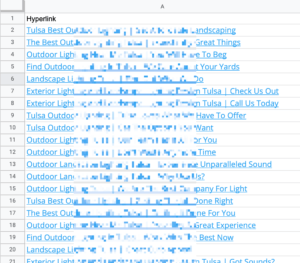

Select all of the ones you’re wanting, then copy and paste into your Sheet. It should come through as a full hyperlink with both the URL and anchor text.

Step 3: Extract URLs and Anchor Text from Hyperlinks in Google Sheets

Both of these steps are optional, but they may assist you for other parts of your process, as you decide…

To extract the text from a hyperlink cell, it’s as easy as this formula (such as in C2, referencing A2): =""&A2

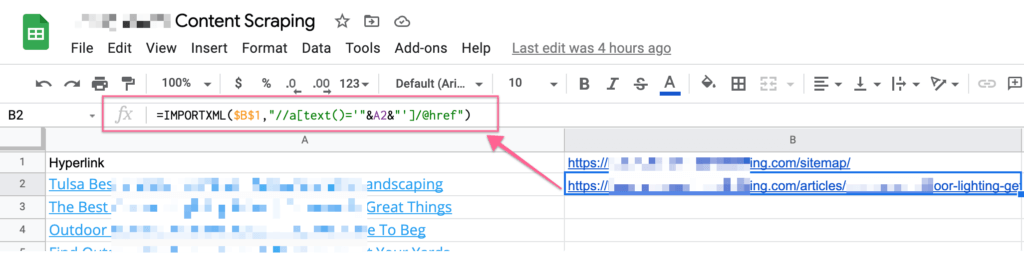

To extract the link’s raw URL (which will be used by other formulas, such as extracting the H2s), set the link to the Sitemap in a cell, such as B1, and then enter this formula, such as in B2: =IMPORTXML($B$1,"//a[text()='"&A2&"']/@href")

That might look intimidating so let’s make sense of it:

- IMPORTXML() is a way to read the contents of a website, then extract what you want from it

- In this case, we’re looking for a link (HTML: <a href=”LINK HERE”>ANCHOR TEXT HERE) where the anchor text matches (the value of cell A2)

- Once found, we get the value of the tag’s ‘href’ part, which is the raw URL

Step 4: Extract the H1-H6 headings

How to extract all of the H2 tags from a URL: =importxml(B2,"//h2")

You can replicate that for H1-H6.

Step 5: Optimize your scraping formulas

If you have dozens or hundreds of scraping requests to make, you’ll run into Google Sheets’ limitations or the website will block the Google Sheets bot from accessing the site, making your calls unfruitful.

(I heard in the past, IMPORTXML() was limited to 50 but in my experience it was 100. See the end of this article for full-on web scraper suggestions.)

Plus, why make 6 different requests when you can make just 1?

Example how to extract the URL and H1-H6 tags into a single scraping request:

=JOIN(" --- ", importxml(A2,"//article//h1 | //article//h2 | //article//h3 | //article//h4 | //article//h5 | //article//h6"))

The other benefit this formula provides is that instead of extracting all the headings into multiple cells below the formula, it keeps it all in a single cell. The JOIN() is how that is accomplished. If this isn’t what you want, remove that from the beginning to still make all 6 extractions in a single scraping request.

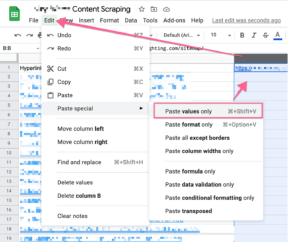

Another recommended optimization is to select all the results (maybe all except the first row’s results so you can keep the formulas), then Paste as Values because this will avoid repeating these scraping calls every time you revisit this Google Sheet, such as closing it today and opening it again tomorrow. You don’t want every visit to the sheet to fire off hundreds of scraping requests.

Bonus – Step 6: Use the Headings for your own inspiration

You might want to do some content analysis on the URLs or just the headings to identify their keyword density.

You might want to spin your own content, such as via search and replace or prepping for a content spinning tool by adding alternatives in certain places.

You might want to plug these bullet points into an Artificial Intelligence (AI) copywriter tool to come up with your own long-form content with their headings as the inputs.

You might want to pass your list along to a human copywriter as a great head-start on idea generation.

References

- BenCollins’ article from 2015, is a bit outdated in minor ways but might have some nice extras, depending on what you’re trying to accomplish

- deux.io’s article from 2014 (the French translates okay into English) has some unique tips and examples (like importing via RSS), including showing how to use Chrome Inspector’s handy “copy Xpath”!

- The IMPORTXML() function requires Xpath notation, and DevHints.io has an Xpath Cheatsheet

- CSS2XPath Converter may be helpful to you

Don’t want to mess with Google Sheets and its limitations? Try ParseHub (computer app), which integrates with Zapier and Zoho Flow, or Apifier (cloud-based) is another alternative and integrates with Zapier natively and has webhooks. ParseHub is easier if you don’t know any code, as it’s a visual builder. Both have free level plans.